<h1>How much of your bloody traffic is actually IPv6? Counting IP families with nftables</h1>

<p>Look, mate, I love IPv6. Addresses the size of a small country, end-to-end connectivity that IPv4 traded away for a fistful of NAT tables, a protocol that's been "about to take over any minute now" since before half the internet's sysadmins started shaving. I've been flying the v6 flag for years.</p>

<p>But when someone asks me <em>"how much of your traffic is actually IPv6?"</em> I used to wave my hands. A lot. Vague gestures toward the dashboard, mumbled excuses about conntrack. No more. Turns out the answer is a few lines of nftables per chain, and it's about time I pulled my (well, Claude's) finger out and generated this data.</p>

<h2>TL;DR</h2>

<ul>

<li>Drop counter-only rules with <code>meta nfproto ipv{4,6}</code> + a descriptive <code>comment</code> at the <strong>top</strong> of each base chain you care about.</li>

<li>Don't trust <code>ct state established,related</code> or nat masquerade counters for bandwidth — they're protocol-agnostic and lossy respectively.</li>

<li>Sum by <code>comment</code> in Prometheus, multiply by 8, call it bps. Divide one sum by another to get a percent. Easy.</li>

<li>Confront your feelings about your actual IPv6 ratio like the grown-up network operator you almost are.</li>

</ul>

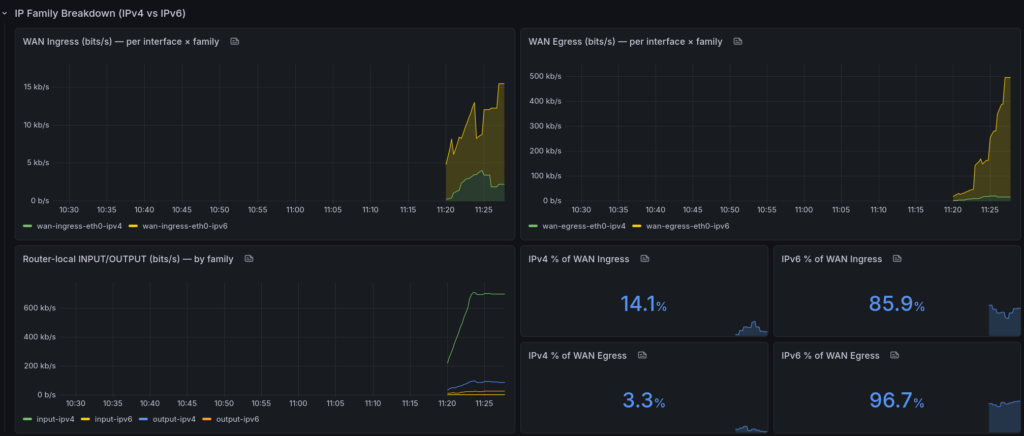

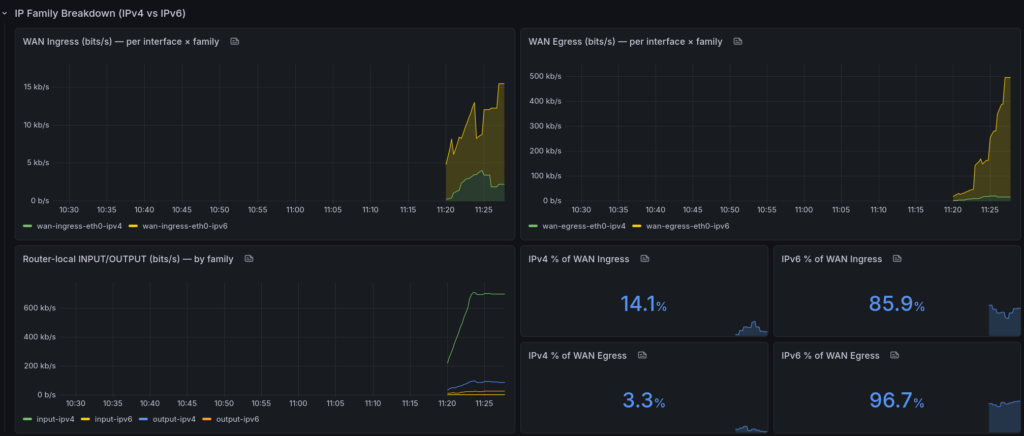

<p><a href="http://cooperlees.com/wp-content/uploads/2026/04/ipv6_dashboard.png"><img src="http://cooperlees.com/wp-content/uploads/2026/04/ipv6_dashboard-1024x436.png" alt="" /></a></p>

<h2>Why the obvious answer doesn't work</h2>

<p>You'd reckon you could just add up the rule counters nftables already maintains, ship them via <a href="https://github.com/metal-stack/nftables-exporter">metal-stack/nftables-exporter</a>, graph them, and call it a day. You'd be wrong. Three problems are waiting for you like a spider in a dunny:</p>

<p><strong>1. <code>ct state established,related counter accept</code> is a bloody black hole.</strong> That single rule catches the overwhelming majority of return traffic — every WAN→LAN byte of a conversation your LAN kicked off. It has no protocol dimension. <code>source_addresses=any</code>. <code>input_interfaces=any</code>. Zero signal. You might as well count clouds.</p>

<p><strong>2. Your other accept rules are protocol-agnostic.</strong> <code>iifname "eth0"</code>, <code>tcp dport 22</code>, <code>iifname "wg0"</code> — they count bytes just fine, but they count them as a smudge of both families. v4 and v6 blurred into a single number. Useless for what we're trying to do.</p>

<p><strong>3. Masquerade counters in the nat table lie.</strong> You might think counting <code>oifname "eth0" ... counter masquerade</code> is a decent proxy for egress. I did too. Then I compared them to my filter FORWARD counters and found the nat numbers were ~1000× smaller. Turns out only the first packet of each connection traverses the nat chain; conntrack applies the stored mapping to every packet after that without consulting the rule again. Perfect for NAT semantics, absolutely cooked for byte accounting.</p>

<h2>The fix: counter-only rules, at the top of the chain</h2>

<p>nftables has a lovely, underused trick: rules with a <code>counter</code> statement and <strong>no verdict</strong>. They match, increment, and fall through to the next rule. Zero impact on firewall logic. The packet keeps moving. Nothing gets accidentally accepted or dropped.</p>

<p>Combine that with <code>meta nfproto ipv4</code> / <code>meta nfproto ipv6</code> to split families, <code>iifname</code> / <code>oifname</code> to split direction, and a <code>comment</code> that the exporter turns into a Prometheus label. Here's what goes in FORWARD on a router with two WANs (<code>att</code> and <code>astound</code>):</p>

<pre><code class="language-nftables">chain FORWARD {
    type filter hook forward priority filter; policy drop;

    # --- Family + interface counters. Top of the chain so every packet
    # --- gets counted before downstream accept rules terminate eval.
    iifname "att" meta nfproto ipv4 counter comment "wan-ingress-att-ipv4"
    iifname "att" meta nfproto ipv6 counter comment "wan-ingress-att-ipv6"
    oifname "att" meta nfproto ipv4 counter comment "wan-egress-att-ipv4"
    oifname "att" meta nfproto ipv6 counter comment "wan-egress-att-ipv6"

    iifname "astound" meta nfproto ipv4 counter comment "wan-ingress-astound-ipv4"
    iifname "astound" meta nfproto ipv6 counter comment "wan-ingress-astound-ipv6"
    oifname "astound" meta nfproto ipv4 counter comment "wan-egress-astound-ipv4"
    oifname "astound" meta nfproto ipv6 counter comment "wan-egress-astound-ipv6"

    # ... your existing firewall rules stay untouched below ...
    ip6 nexthdr icmpv6 counter accept
    ip6 saddr fc00::/7 counter accept
    # ... etc.
}</code></pre>
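<p>With more than a couple of WANs, typing those rules by hand gets old fast. Here's a minimal sketch that generates them, assuming the same <code>wan-&lt;direction&gt;-&lt;iface&gt;-&lt;family&gt;</code> comment scheme used above. The <code>counter_rules</code> helper is hypothetical, not part of nftables or the exporter; paste its output into your chain yourself.</p>

```python
# Hypothetical helper: builds the counter-only rules for each WAN interface.
# The comment scheme mirrors the post: wan-<direction>-<iface>-<family>.

def counter_rules(wan_ifaces):
    """Return nftables counter-only rules for every WAN x direction x family."""
    directions = (("iifname", "ingress"), ("oifname", "egress"))
    families = ("ipv4", "ipv6")
    rules = []
    for iface in wan_ifaces:
        for match, direction in directions:
            for family in families:
                rules.append(
                    f'{match} "{iface}" meta nfproto {family} '
                    f'counter comment "wan-{direction}-{iface}-{family}"'
                )
    return rules


for rule in counter_rules(["att", "astound"]):
    print(rule)
```

<p>For <code>att</code> and <code>astound</code> this prints the same eight rules as the FORWARD chain above, in the same order.</p>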

<p>And for INPUT / OUTPUT (router-local traffic), the no-frills version:</p>

<pre><code class="language-nftables">chain INPUT {
    type filter hook input priority filter; policy drop;

    meta nfproto ipv4 counter comment "input-ipv4"
    meta nfproto ipv6 counter comment "input-ipv6"

    # ... existing rules ...
}

chain OUTPUT {
    type filter hook output priority filter; policy accept;

    meta nfproto ipv4 counter comment "output-ipv4"
    meta nfproto ipv6 counter comment "output-ipv6"
}</code></pre>

<p><strong>Ordering matters.</strong> Put these at the top. Put them below an accept and they'll never see the packets that were just sent off to their happy path. Yes, that also means you'll count packets that subsequently get dropped by policy — on a sensible home/edge router that's a rounding error and not worth the mental overhead of surgery.</p>
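<p>If you'd rather have a script check the ordering than eyeball it, here's a rough sketch. It walks a ruleset shaped like <code>nft -j list chain ...</code> output and flags counter-only rules that sit below any rule carrying a verdict. The sample JSON is hand-written and its layout is my assumption of what nft emits, so check it against your own version; <code>misplaced_counters</code> is a hypothetical helper. It's deliberately conservative: a conditional accept above a counter only diverts the packets it matches, but the counter still undercounts.</p>

```python
import json

# Hand-written sample shaped like `nft -j list chain ...` output; the exact
# JSON layout is an assumption, so check it against your nft version.
SAMPLE = json.loads("""
{"nftables": [
  {"rule": {"chain": "FORWARD", "comment": "wan-egress-att-ipv6",
            "expr": [{"counter": {"packets": 10, "bytes": 1200}}]}},
  {"rule": {"chain": "FORWARD",
            "expr": [{"counter": {"packets": 7, "bytes": 900}},
                     {"accept": null}]}},
  {"rule": {"chain": "FORWARD", "comment": "wan-ingress-att-ipv4",
            "expr": [{"counter": {"packets": 0, "bytes": 0}}]}}
]}
""")

# Statements treated as "this rule can end or divert evaluation".
VERDICTS = {"accept", "drop", "reject", "jump", "goto", "return"}


def misplaced_counters(ruleset, chain):
    """Return comments of counter-only rules that sit below a verdict rule."""
    seen_verdict = False
    misplaced = []
    for item in ruleset["nftables"]:
        rule = item.get("rule")
        if rule is None or rule.get("chain") != chain:
            continue
        # Each expr entry is a one-key dict; collect the statement names.
        statements = {key for expr in rule["expr"] for key in expr}
        if statements & VERDICTS:
            seen_verdict = True
        elif "counter" in statements and seen_verdict:
            misplaced.append(rule.get("comment", "(no comment)"))
    return misplaced


print(misplaced_counters(SAMPLE, "FORWARD"))
```

<p>In the sample, the IPv4 ingress counter sits below an accept rule, so it's the one that gets flagged.</p>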

<p>Nat table? Leave it alone. For the reasons in problem #3 above.</p>

<h2>Wiring it up to Prometheus</h2>

<p>If you're already running <a href="https://github.com/metal-stack/nftables-exporter">metal-stack/nftables-exporter</a>, you're done. It emits:</p>

<pre><code>nftables_rule_bytes{family="inet", chain="FORWARD", comment="wan-egress-att-ipv6", ...}
nftables_rule_packets{...same labels...}</code></pre>

<p>Your <code>comment</code> becomes a label. Queries become trivial.</p>

<h2>The maths</h2>

<p>Bytes become bits per second with a rate and a multiply-by-eight, because humans like bps on network graphs:</p>

<pre><code class="language-promql"># Total egress bps through all WAN interfaces on $host, split by family
8 * sum by (comment) (
  rate(nftables_rule_bytes{instance="$host", comment=~"wan-egress-.*"}[5m])
)</code></pre>
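<p>If the rate-times-eight step feels like magic, here's the same arithmetic by hand on two readings of one cumulative counter. A minimal sketch with made-up numbers: <code>bits_per_second</code> is a hypothetical helper, and unlike PromQL's <code>rate()</code> it ignores counter resets and window extrapolation.</p>

```python
def bits_per_second(bytes_then, bytes_now, seconds):
    """Average bit rate between two readings of a cumulative byte counter."""
    return 8 * (bytes_now - bytes_then) / seconds


# Made-up readings 300 s apart (a 5m window): 37,500,000 bytes transferred.
print(bits_per_second(1_000_000, 38_500_000, 300))  # 1000000.0, i.e. 1 Mbps
```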

<p>Stacked area chart, one stripe per WAN × family. Fair dinkum beautiful.</p>

<p>Want the percentage? Classic sum-over-sum:</p>

<pre><code class="language-promql"># IPv6 as a percent of WAN egress on $host
100 *
  sum(rate(nftables_rule_bytes{instance="$host", comment=~"wan-egress-.*-ipv6"}[5m]))
/
  sum(rate(nftables_rule_bytes{instance="$host", comment=~"wan-egress-.*"}[5m]))</code></pre>

<p>Swap <code>egress</code> for <code>ingress</code> to look at the other direction. Swap <code>ipv6</code> for <code>ipv4</code> if you want to stare at the number that refuses to die.</p>

<h2>What the numbers actually said</h2>

<p>Here's what my home1 router (two WANs, <code>att</code> primary, <code>astound</code> backup) was counting a few minutes after I rolled this out. Cumulative bytes since the rules loaded:</p>

<pre><code>wan-ingress-att-ipv6    3,671,749 bytes  (3.50 MiB)
wan-ingress-att-ipv4    1,650,060 bytes  (1.57 MiB)
wan-egress-att-ipv6     9,155,663 bytes  (8.73 MiB)
wan-egress-att-ipv4     8,451,381 bytes  (8.06 MiB)
wan-ingress-astound-*   0                (idle backup)
wan-egress-astound-*    0</code></pre>

<p>Put those through the formula:</p>

<ul>

<li><strong>IPv6 % of WAN ingress (home1):</strong> 3,671,749 / (3,671,749 + 1,650,060) × 100 ≈ <strong>69 %</strong></li>

<li><strong>IPv6 % of WAN egress (home1):</strong> 9,155,663 / (9,155,663 + 8,451,381) × 100 ≈ <strong>52 %</strong></li>

</ul>
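<p>To sanity-check that arithmetic, here's a small sketch that runs the sum-over-sum on the home1 counters quoted above. It selects comments with a full-match regex, which is how PromQL's <code>=~</code> behaves. The <code>total</code> and <code>ipv6_percent</code> helpers are hypothetical names, and the idle <code>astound</code> counters are left out since they're all zero.</p>

```python
import re

# Cumulative byte counters quoted in the post for home1.
COUNTERS = {
    "wan-ingress-att-ipv6": 3_671_749,
    "wan-ingress-att-ipv4": 1_650_060,
    "wan-egress-att-ipv6": 9_155_663,
    "wan-egress-att-ipv4": 8_451_381,
}


def total(counters, pattern):
    """Sum counters whose comment fully matches pattern, like PromQL's =~."""
    return sum(v for k, v in counters.items() if re.fullmatch(pattern, k))


def ipv6_percent(counters, direction):
    """IPv6 bytes as a percentage of all WAN bytes in one direction."""
    v6 = total(counters, f"wan-{direction}-.*-ipv6")
    both = total(counters, f"wan-{direction}-.*")
    return 100 * v6 / both


print(round(ipv6_percent(COUNTERS, "ingress"), 1))  # 69.0
print(round(ipv6_percent(COUNTERS, "egress"), 1))   # 52.0
```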

<p>Now <em>that's</em> more like it. A clear v6 majority inbound, which makes sense: big content providers (Google, Facebook, Netflix, Cloudflare) all serve v6 by default, and any modern client behind my router will happy-eyeball its way onto the v6 path whenever it's on offer. Egress is closer to a coin flip because there's still a long tail of outbound connections to services that refuse to put AAAA records on their hostnames. You know who you are (Discord, GitHub, Steam ...).</p>

<p>And over at my home2 site, where I run a Hurricane Electric IPv6 tunnel because my ISP there thinks IPv6 is a mythological creature:</p>


<ul>

<li><strong>IPv6 % of WAN egress (home2):</strong> around <strong>83 %</strong></li>

</ul>

<p>Why the difference? Because the handful of boxes behind home2 happy-eyeball their way to v6 first, and the moment the tunnel's healthy that's what they ride. Which is how the web should bloody well work everywhere.</p>

<h2>Why not sFlow, NetFlow, eBPF, etc.?</h2>

<p>Fair question. If you want per-host, per-AS, per-L7-protocol deep telemetry, go reach for sFlow via hsflowd or roll an eBPF exporter with XDP + TC hooks (<a href="https://github.com/cloudflare/ebpf_exporter">cloudflare/ebpf_exporter</a> has the bones). I looked at all of them for this.</p>

<p>For "IPv4 vs IPv6 per WAN", nftables counters win on three axes:</p>

<ul>

<li><strong>Accuracy.</strong> Every byte, no sampling. sFlow's 1-in-1000 is great for top-N talkers, useless for "is this 12 % v6 or 15 %".</li>

<li><strong>Overhead.</strong> A pointer deref per packet. You can't really beat it.</li>

<li><strong>Moving parts.</strong> Zero new daemons. You already run the exporter. You already scrape it. You already have a dashboard.</li>

</ul>

<p>If you want the richer story (who, what app, which AS), add sFlow/eBPF <strong>alongside</strong> this — don't replace it. They answer different questions.</p>

<p>Three rows on my nftables Grafana dashboard:</p>

<ul>

<li><strong>Generic nftables</strong> — the default per-chain packets/sec panels you probably already had lying around.</li>

<li><strong>Logs (loki)</strong> — panels for my drop logs, separate row so they stop cluttering the metrics above.</li>

<li><strong>IP Family Breakdown</strong> — the new kids. Stacked WAN ingress bps, stacked WAN egress bps, a per-family router-local panel, and a 2×2 grid of stat panels for IPv4 % / IPv6 % on each direction.</li>

</ul>

<p>The <code>$host</code> variable uses <code>label_values(nftables_rule_bytes, instance)</code> so every router/VPS in the fleet shows up automatically: I add an exporter, the dropdown grows, dashboard works. No surgery required. If you want pretty display names instead of <code>home1v6:9630</code>, Grafana's regex extract will do it, but be careful: some versions of Grafana rewrite the <em>value</em> as well as the <em>text</em> when you use <code>(?&lt;text&gt;…)</code>, and your panels go silent. Ask me how I know.</p>

<p>I'll try to upload my dashboard, but Grafana's down as I write this ... Will edit and link once it's back up.</p>

<p>Cheers.</p>
